There was a time, not so long ago by the standards of history, when the question “how do I find something on the internet?” had a dozen different answers. AltaVista. Excite. Lycos. Infoseek. WebCrawler. Ask Jeeves. Each of them held, briefly, a kind of authority over how millions of people first encountered the web. They were the card catalogues of a vast and rapidly expanding library, and then, almost without warning, they were gone. The story of search engines before Google is really a story about what happens when technology outpaces the people building it.

The First Crawlers: When Robots Began Indexing the Web

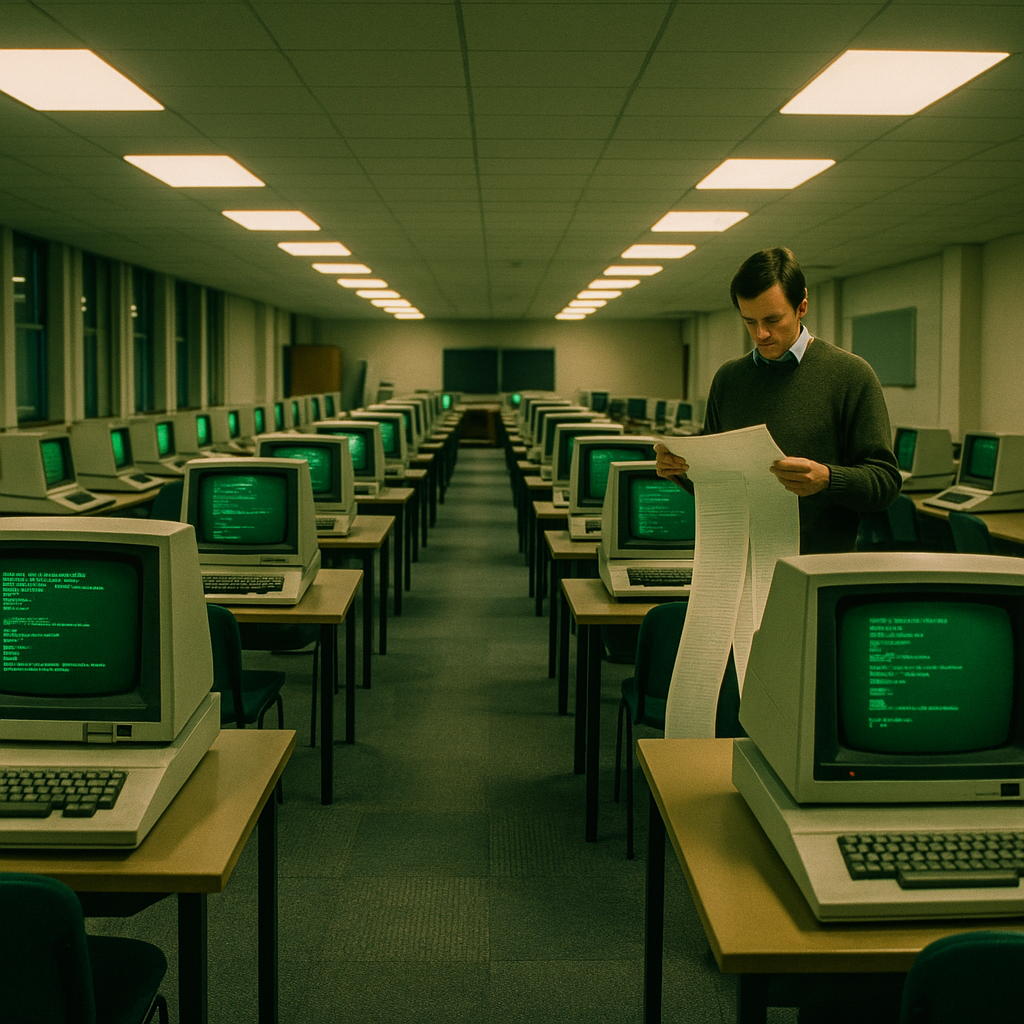

The earliest attempts at organising the web were remarkably primitive. Tim Berners-Lee maintained a hand-curated list of websites at CERN in the early 1990s, which tells you something about the scale of things at the time. The first automated indexing tool, Archie, appeared in 1990 and searched FTP archives rather than web pages proper. Then came Veronica and Jughead, which searched the menus of Gopher servers; together with Archie, their names sound more like the cast of a children’s comic than infrastructure for a global information network.

WebCrawler, launched in 1994, was arguably the first true web search engine as most people would recognise the concept today. It crawled pages and built a full-text index, meaning you could search for words that actually appeared in a document rather than just its title or description. Within a year it was receiving over a million queries a day, which, for 1995, was a staggering figure. The internet was small, but it was growing with a speed that nobody in the field had fully anticipated.
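To make the idea of a full-text index concrete, here is a minimal sketch of an inverted index, the general data structure behind full-text search: every word points to the set of pages that contain it. It is a toy illustration under simplifying assumptions (lower-cased words, an implicit AND between query terms, and made-up page names), not a reconstruction of how WebCrawler itself was built.

```python
import re
from collections import defaultdict


def tokenize(text: str) -> list[str]:
    # Lower-case the text and split it into alphanumeric words.
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs: dict[str, str]) -> dict[str, set[str]]:
    # Map every word to the set of document IDs in which it appears.
    index: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for word in tokenize(text):
            index[word].add(doc_id)
    return index


def search(index: dict[str, set[str]], query: str) -> set[str]:
    # Return the documents that contain every word in the query.
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


# Hypothetical pages: only page1 and page3 contain the words in their body text.
docs = {
    "page1": "WebCrawler indexes the full text of web pages.",
    "page2": "A directory lists pages by title and description.",
    "page3": "Full text search finds words inside the body of a page.",
}
index = build_index(docs)
print(sorted(search(index, "full text")))  # ['page1', 'page3']
```

A title-only catalogue would have to rely on whatever a page’s author chose to put in its title or description; the inverted index lets any word in the body be found.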

AltaVista and the Brief Golden Age of Proper Search

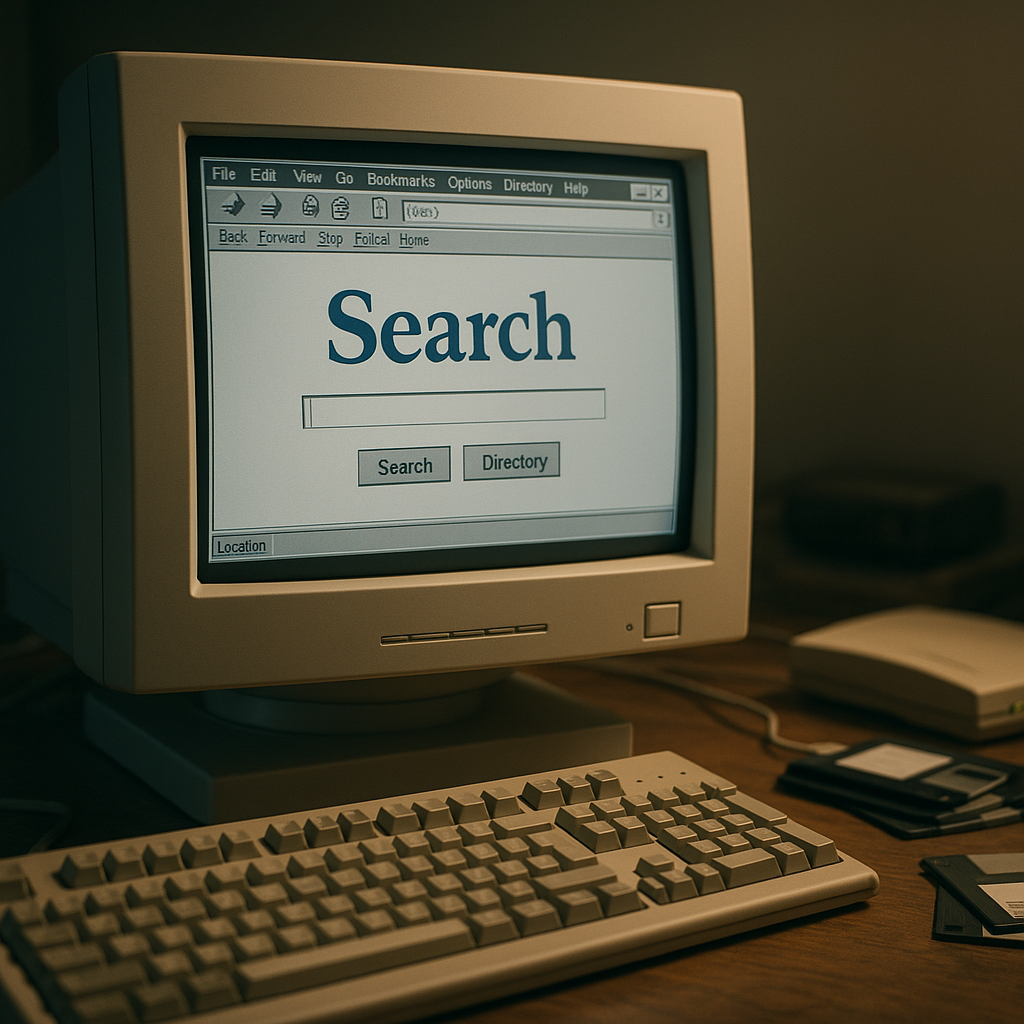

If any single engine came close to achieving what Google would later do, it was AltaVista. Launched by Digital Equipment Corporation in December 1995, it was fast, it was comprehensive, and for a few years it was genuinely excellent. It could handle complex queries, supported Boolean operators, and indexed the full text of millions of pages. Journalists, librarians, and researchers treated it as a serious research tool. I have read accounts from that era in which people describe AltaVista the way a later generation would describe Google: as something that felt almost magical.

Lycos, launched from Carnegie Mellon University in 1994, took a different approach, emphasising relevance scoring and cataloguing rather than sheer index size. It became one of the most visited sites on the web by the late 1990s and even launched a UK-specific version. Infoseek, Excite, and HotBot carved out their own audiences too. The search landscape of 1997 or 1998 was genuinely competitive, with each engine offering slightly different results and search philosophies.

Ask Jeeves and the Human Touch

Ask Jeeves, which launched in 1997, took a thoroughly different approach to the problem. Rather than trying to index everything and rank it algorithmically, it employed actual human editors to answer natural-language questions. You typed “What is the capital of France?” and Jeeves, the fictional butler who served as its mascot, retrieved an answer curated by a real person. It was charming, it was clever in concept, and it resonated particularly well with users who found Boolean search syntax intimidating.

In the UK, Ask Jeeves became something of a cultural fixture. Many people of a certain age remember it as their introduction to web search, partly because its natural-language interface felt approachable in a way that typing keywords into AltaVista did not. It was eventually rebranded simply as Ask.com in 2006, and the butler was quietly retired. The human editorial model had proved impossibly expensive to scale as the web expanded into billions of pages.

Yahoo Search: The Directory That Became an Engine

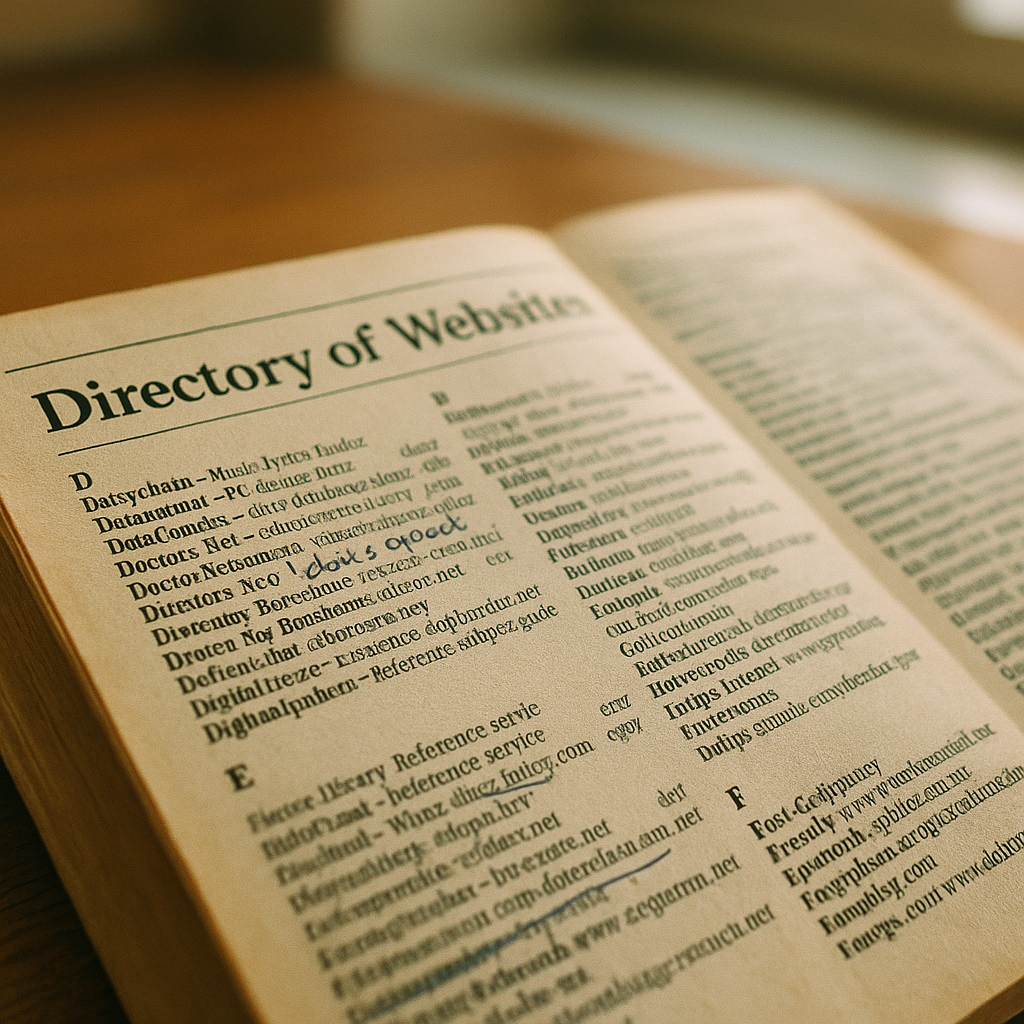

Yahoo’s relationship with search is more complicated than it first appears. Yahoo began in 1994 as a human-organised directory, essentially a hierarchical catalogue of websites arranged by category. Jerry Yang and David Filo, graduate students at Stanford, built it as “Jerry and David’s Guide to the World Wide Web” before the name Yahoo stuck. For several years, Yahoo’s directory was the dominant way people navigated the web, and it worked well when the web was small enough to catalogue by hand.

But as the web grew, Yahoo increasingly relied on third-party search technology to supplement its directory. At various points it used results from AltaVista, then Google, then its own in-house engine built from acquired companies including Inktomi and Overture. Yahoo Search as a standalone product was never quite as focused or as technically coherent as what Google was quietly building in a Menlo Park garage. Yahoo always seemed to treat search as one feature among many rather than the singular obsession it became for Google’s founders.

Why They All Failed: The Ranking Problem

Understanding the failure of the pre-Google engines requires understanding what they were actually doing when they returned results. Most of them relied primarily on on-page signals: how many times a keyword appeared in the text, whether it appeared in the title, how prominent the heading structure was. This made them easy to manipulate. Webmasters quickly learnt that repeating a keyword dozens of times in tiny white text on a white background, invisible to users but readable by crawlers, could push a page to the top of results for almost any query. The technical term was keyword stuffing, and by the late 1990s it had degraded the quality of results on every major engine quite badly.
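A toy scoring function shows why on-page signals were so easy to game. The sketch below assumes a hypothetical scheme (one point per occurrence of the keyword in the body text, plus a flat five-point bonus if it appears in the title); no engine of the era published its real weights, and the page addresses are invented.

```python
import re


def tokenize(text: str) -> list[str]:
    # Lower-case the text and split it into alphanumeric words.
    return re.findall(r"[a-z0-9]+", text.lower())


def on_page_score(page: dict[str, str], keyword: str) -> int:
    # Hypothetical weights: one point per body occurrence, plus a title bonus.
    body_hits = tokenize(page["body"]).count(keyword)  # raw count rewards repetition
    title_bonus = 5 if keyword in tokenize(page["title"]) else 0
    return body_hits + title_bonus


pages = [
    {
        "url": "honest.example",
        "title": "Guide to cheap flights",
        "body": "Compare cheap flights from UK airports, with tips on booking.",
    },
    {
        "url": "stuffed.example",
        "title": "Home",
        # Imagine these repeats rendered as white text on a white background.
        "body": "Welcome. " + "flights " * 40,
    },
]

ranked = sorted(pages, key=lambda p: on_page_score(p, "flights"), reverse=True)
for page in ranked:
    print(page["url"], on_page_score(page, "flights"))
# stuffed.example 40
# honest.example 6
```

Because the raw count grows without limit, forty hidden repetitions easily outweigh an honest title match, which is exactly the weakness keyword stuffers exploited.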

Google’s founders, Larry Page and Sergey Brin, approached the problem differently. Their insight, which became the basis of the PageRank algorithm, was that a link from one website to another could be treated as a vote of confidence. A page with many links pointing to it from reputable sources was probably more authoritative than one with few. This was not a perfect solution, and it too was eventually gamed, but in 1998 it produced results that were dramatically better than anything else available. Users noticed immediately.
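The “links as votes” idea can be sketched with the simplified PageRank formulation found in most textbooks, computed by repeatedly passing each page’s score along its outgoing links (power iteration). The link graph below is hypothetical, the damping factor of 0.85 is the value Page and Brin suggested in their original paper, and pages with no outgoing links are assumed to share their score evenly across every page. Google’s production ranking has long combined link analysis with many other signals, so treat this only as an illustration of the core idea.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    # Collect every page that appears as a source or as a link target.
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start from a uniform distribution
    for _ in range(iterations):
        # Every page receives a small baseline share (the "random jump").
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's vote equally among the pages it links to.
                share = damping * rank[page] / len(targets)
                for t in targets:
                    new_rank[t] += share
            else:
                # Dangling page (no outgoing links): spread its vote over all pages.
                share = damping * rank[page] / n
                for t in pages:
                    new_rank[t] += share
        rank = new_rank
    return rank


# Hypothetical link graph: "a", "b" and "c" all link to "hub", which links back to "a".
links = {
    "a": ["hub"],
    "b": ["hub"],
    "c": ["hub", "deadend"],
    "hub": ["a"],
    "deadend": [],
}

ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{page:8} {score:.3f}")
```

Here “hub” ends up with the highest score because three pages vote for it, while “b” and “c”, which nothing links to, keep only the baseline share plus whatever the dead-end page redistributes. Adding more repetitions of a word to “b” would change nothing; only links from other pages move the numbers.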

The reverberations of that shift are still felt today. Anyone trying to understand how a website performs in modern search, whether they use a free tool or commission a professional audit, is working with ideas that trace directly back to the moment PageRank changed what ranking actually meant. Search Engine Tuning, a UK-based service offering a free SEO check for websites, operates in a landscape shaped by decisions made in the late 1990s. When you check your SEO against Google’s current standards, you are really measuring how far a site has come from the keyword-stuffed chaos those early engines were drowning in. The tool at searchenginetuning.co.uk would have seemed like science fiction to anyone wrestling with AltaVista’s declining results in 1999.

What the Old Engines Left Behind

It would be wrong to treat the pre-Google era purely as a story of failure. Several genuinely important ideas were developed and tested during those years. Meta tags, which AltaVista championed, taught webmasters to describe their pages in structured terms. Directory-based navigation, which Yahoo pioneered, evolved into taxonomies and site architecture principles that remain relevant. Paid search, which Overture (originally GoTo.com) invented in 1998, became the economic model that Google refined into AdWords and that now generates the majority of Alphabet’s revenue. The forgotten engines were not simply replaced; they were cannibalised.

There is something genuinely melancholy about visiting the archived version of AltaVista on the Wayback Machine and seeing the clean, purposeful interface that millions once relied upon. It does not look like a relic. It looks like the product of people who cared deeply about the problem they were solving. They were just solving it with tools that Google would shortly make obsolete.

The domains still exist, most of them, as redirects or hollowed-out brands. AltaVista’s domain now points to Yahoo. Ask.com still operates in a diminished form. Lycos maintains a small presence. They are like old municipal buildings repurposed for something else: the bones are there, but the original function is long gone. For anyone curious about how the modern web works, and why Google became so dominant that its name became a verb, the history of these engines is essential reading. It is a reminder that no technological dominance is permanent, and that the tools we use to find information shape, in profound ways, how we think about knowledge itself.

It is also worth noting that for businesses operating online today, the lessons of the search wars remain practical rather than merely historical. When Search Engine Tuning offers a free SEO check through its UK-based platform, it is partly helping site owners understand whether their pages are visible to Google’s crawlers, much as early webmasters once desperately tried to make theirs visible to AltaVista’s spiders. The fundamentals have not changed as much as one might expect: check your SEO, build authority across your domains, and avoid the manipulative shortcuts that killed rankings in 1999. The tools are sharper; the underlying logic is the same.

Frequently Asked Questions

What were the most popular search engines before Google?

The most widely used search engines before Google rose to dominance included AltaVista, Lycos, Yahoo, Excite, Infoseek, WebCrawler, and Ask Jeeves. Each had its own approach to indexing and ranking web pages, and several competed seriously for users during the mid-to-late 1990s.

Why did AltaVista fail as a search engine?

AltaVista struggled with declining result quality caused by widespread keyword stuffing and spam, and it passed through a succession of owners (DEC, then Compaq, then CMGI, then Overture, and finally Yahoo), none of which gave it a coherent long-term strategy. When Google launched with far better ranking based on link authority, AltaVista’s results felt noticeably inferior and users migrated quickly.

When did Google overtake other search engines in the UK?

Google was founded in 1998 and grew rapidly throughout 1999 and 2000. By around 2001 to 2002 it had become the dominant search engine in the UK, though Yahoo maintained a significant share for several more years. Google’s share in the UK has been above 90% for much of the past two decades.

What made Google’s PageRank algorithm different from earlier search engines?

Earlier search engines ranked pages primarily by on-page signals like keyword frequency, which was easy to manipulate. Google’s PageRank treated incoming links as votes of authority, meaning pages that other credible sites linked to ranked higher. This produced far more reliable results and was much harder to game at scale, at least initially.

Is Ask Jeeves still available?

Ask Jeeves was rebranded as Ask.com in 2006, and the butler mascot was retired. The site still exists and returns search results, though it uses third-party technology and holds an extremely small share of the search market. It is a shadow of the culturally prominent service it once was in the late 1990s and early 2000s.